How I Fixed Context Windows

Or, Better Than a 1M Context Window.

You don’t have to use LLM coding agents much before you understand the problems with long-running sessions. First the session gets a little slower. Then a little sloppier. Then the agent starts making weird and nonsensical choices.

This happens because the context is full of dead ends, stale plans, bad tool output, revised choices, and facts that were wrong the first time and somehow got promoted to scripture anyway. You correct it, but it still thinks the first, incorrect version is gospel.

Then auto-compaction rolls in with the delicate touch of a backhoe sent to organize a watchmaker’s bench. Your entire session gets replaced with a summary. The summary is inevitably missing a bunch of critical details that you’ve already painstakingly covered, and now all of that is just forgotten. At that point, you’re frequently better off starting over from scratch.

We’ve developed techniques for working around these limitations, and we’ve also developed an anxiety around filling up that context. The moment we see the LLM run a build script that produces pages and pages of mostly useless output, we start getting irritated because we already told it to use our special build script that hides that stuff.

Some models have a larger context window, but that’s not all it’s cracked up to be. A huge context window gives the model room to carry more history. That includes useful history, and it also includes junk. Old tool output, abandoned approaches, resolved bugs, stale assumptions, and conversational debris all stay in the context, where they keep affecting later turns.

Pruning the junk

What if all this could be improved? What if we could just tell the LLM, “hey, you can just forget all that build output,” and that content was magically removed from the context? Even better, what if the LLM itself realized this and just did it automatically? What if, after a long debugging session, the LLM finally fixed a difficult issue and then, before moving on, pruned away that lengthy debug session and left behind just a summary of the important bits it figured out?

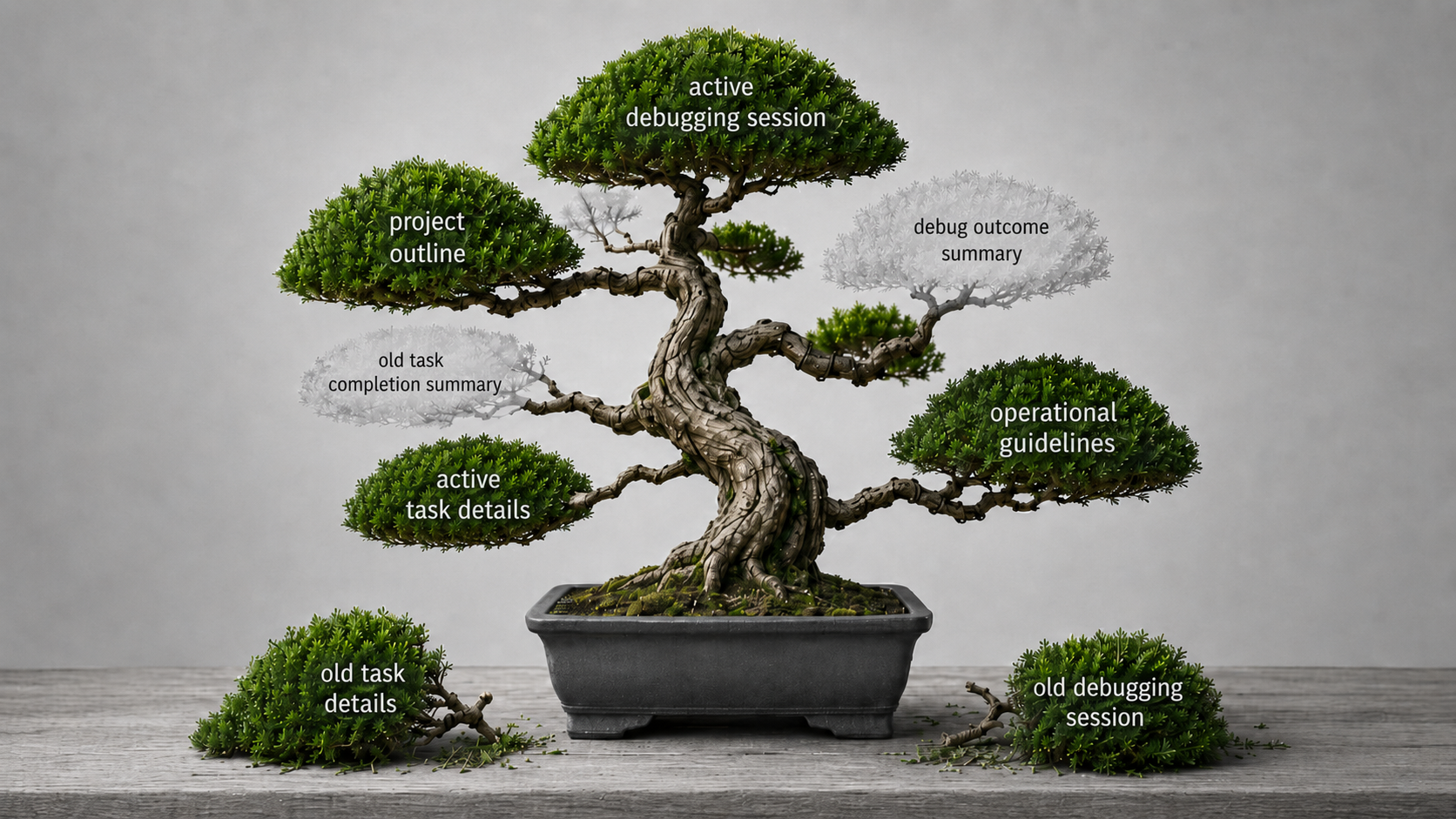

That is why I made Context Bonsai. The idea is simple. Let the model archive older completed parts of the conversation, leave behind a compact summary placeholder, and bring the full details back later if they become relevant again. That keeps old junk out of the active prompt without throwing it away completely.

So the session has a chance to stay cleaner as it grows. Solved problems can move out of the way. The important thread stays visible. Archived details are still there when they are actually needed.
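To make the archive-and-restore idea concrete, here is a minimal sketch in Python. It is not the actual Context Bonsai code: the `Session` class, its `prune` and `restore` methods, and the `[archive-N]` placeholder format are all hypothetical names made up for illustration. It only shows the shape of the mechanism: a span of messages moves into a side store, a one-line summary takes its place, and the span can be swapped back in later.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    role: str
    content: str


@dataclass
class Session:
    """Hypothetical session model; not the real Context Bonsai data structures."""
    messages: list[Message] = field(default_factory=list)
    archive: dict[str, list[Message]] = field(default_factory=dict)
    next_id: int = 1

    def prune(self, start: int, end: int, summary: str) -> str:
        """Move messages[start:end] into the archive, leaving a summary placeholder."""
        if not (0 <= start < end <= len(self.messages)):
            raise ValueError("invalid message range")
        archive_id = f"archive-{self.next_id}"
        self.next_id += 1
        self.archive[archive_id] = self.messages[start:end]
        placeholder = Message("system", f"[{archive_id}] {summary}")
        self.messages[start:end] = [placeholder]
        return archive_id

    def restore(self, archive_id: str) -> None:
        """Replace the placeholder with the full archived messages."""
        prefix = f"[{archive_id}] "
        for i, msg in enumerate(self.messages):
            if msg.role == "system" and msg.content.startswith(prefix):
                self.messages[i:i + 1] = self.archive.pop(archive_id)
                return
        raise KeyError(archive_id)
```

In this sketch, pruning a noisy build log out of a four-message session leaves three messages in the active prompt, and calling `restore` with the returned id puts the original four back exactly as they were.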

What stays in context

The LLM is given guidance about what should stay in the context. Any uncompleted goals stay. Unresolved work stays. Initial orientation discussion setting up the purpose for the session stays. Any clearly stated rules or guidelines about how to operate stay.

Otherwise, the oldest content is prioritized for removal. The LLM is occasionally fed an ephemeral system message inviting it to consider whether there are any completed tasks or threads that can be pruned away. You get to have a session that is tighter, more focused on the task that actually needs to be done, and the session lasts significantly longer before hitting the auto-compaction wall.
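The retention rules and the occasional reminder can be sketched roughly like this. Everything here is an assumption for illustration: the `Thread` fields, the `prune_candidates` and `build_prompt` helpers, the reminder wording, and the every-10-turns interval are all hypothetical. In the real tool the guidance is given to the model as instructions, and the model makes the call; this code just spells out the same rules as a filter.

```python
from dataclasses import dataclass

# Hypothetical wording; the real reminder text may differ.
REMINDER = "If any earlier tasks or threads are complete, consider pruning them."


@dataclass
class Thread:
    """A hypothetical unit of conversation, tagged for illustration."""
    name: str
    started_at_turn: int
    completed: bool = False
    is_orientation: bool = False  # initial discussion setting up the session
    is_rule: bool = False         # stated rules or guidelines for operating


def prune_candidates(threads: list[Thread]) -> list[Thread]:
    """Return threads that may be pruned, oldest first.

    Unfinished work, orientation, and rules always stay in context.
    """
    prunable = [
        t for t in threads
        if t.completed and not t.is_orientation and not t.is_rule
    ]
    return sorted(prunable, key=lambda t: t.started_at_turn)


def build_prompt(history: list[str], turn: int, every: int = 10) -> list[str]:
    """Copy the history, adding the reminder on every `every`-th turn.

    The reminder is ephemeral: it is added to the returned copy only,
    so it never accumulates in the stored history.
    """
    prompt = list(history)
    if turn > 0 and turn % every == 0:
        prompt.append(REMINDER)
    return prompt
```

The point of building the prompt from a copy is that the nudge shows up for one turn and then disappears, so the reminder itself never becomes part of the clutter it is asking the model to clean up.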

Try it

If you want to try it, start at the hub repo. That is the front door. It explains the idea, links to the harness-specific implementations, and points to the current state of each port.

If you use it, I’d love some feedback. I’m especially interested if you see it prune content it shouldn’t have or not prune content it should have. Did you find it helpful? Did the prune tool ever encounter failures?

The main reference implementation is the OpenCode version. There’s also a Claude Code version that I’ve tested and that seems to work fine, but I don’t use Claude Code as my primary harness, so it has had less testing. I’ve also started on ports for other harnesses, but I haven’t tested or reviewed those yet.